Bubble Politics

AI progress will survive market corrections. But AI policy is overexposed.

For any new technology, anxious critics like to reach for a good hype cycle story. With AI, shallow attempts at this frequently fly in the face of reality: Clearly, many AI investments are quite a bit more material and robust than tulips and subprime paper. But the fact that it’s foolish to dismiss AI as ‘only a bubble’ makes it a bit harder to spot a kernel of truth: On top of a lot of prudent and strategically justified spending, AI has seen an influx of overactive investment and dumb money.

Continued success of these investments hinges on continued high levels of political support, and requires very specific AI futures to be profitable. When some future paths close and the boosterish vibes diminish, many shallow investments will run. Once that happens, the vultures will try to descend on the still-quite-alive body of frontier AI. In the ensuing chaos, I’m confident core investments into AI progress will remain intact. But I worry about the policy conversation derailing.

More than any market, the AI policy conversation is dramatically overexposed to the risk of a ‘vibe bubble’ bursting: most frontier AI experts have established their credibility by being right about AI progressing faster than expected. Their reputations, their agendas, and their policies are at risk as soon as policymakers start thinking that some sort of bubble has burst. I think that could happen soon.

On Bubbleology

People like talking about bubbles in AI for reasons that often have very little to do with factual trends. It makes them feel vindicated about being late to the party, and relieved that they aren’t missing much. It’s also one of the last remaining straws for holdouts of the ‘AI is not a big deal’ faction to hang on to. On these grounds, I feel like many colleagues working on frontier AI policy dismiss ‘bubble talk’ very quickly. But I think that’s short-sighted: There can be a bubble on top of a very real phenomenon. And because talk affects public perception, which in turn affects political support, and then spending decisions, mere “talk” of a bubble can very easily manifest radical market corrections.

This piece, therefore, is an invitation to develop some contingency plans in the face of a superficial bubble and the political effects of its potential burst. Such a bubble is primarily shaped by the volatility of hype-driven investment capital that has, by happenstance, latched onto the very real trend of progress toward transformative AI.

That makes it entirely independent of ‘AI being a big deal’ or even ‘transformers or LLMs being a big deal’. Accepting that dissonance is difficult – a lot of hype-grabbing headlines, and some intelligent analysis, have recently been dismissed by AI-pilled observers because they are perceived to be in tension with prospects of very fast AI progress. I won’t offer my own half-baked financial analyses, but I will offer three reasons that might allow you to reconcile your optimism in AI progress with taking recent bubble talk seriously.

The Shape of the Bubble

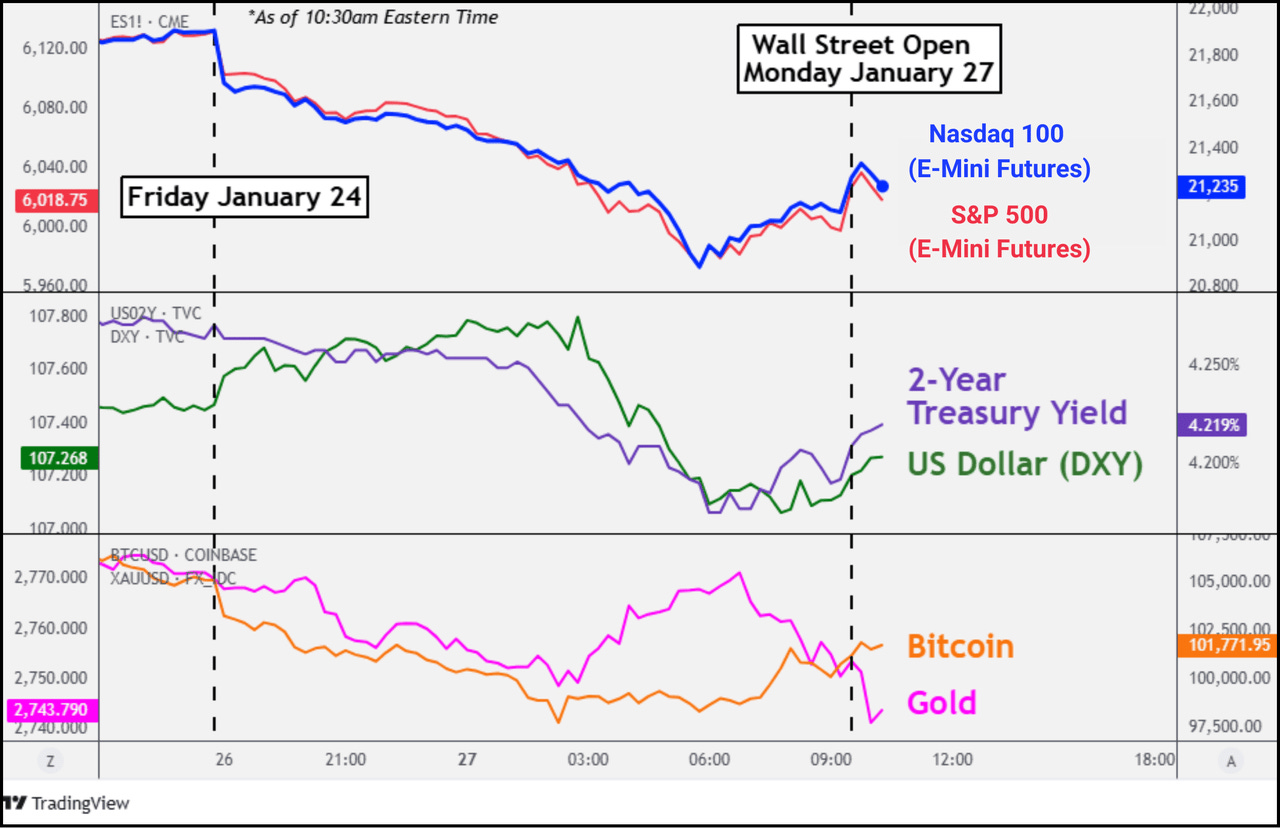

Quite generally, AI investment imperfectly tracks the pace of AI progress. That is almost necessarily true, because you can’t actually invest into ‘AI being a big deal’. That is one of the reasons why the original LessWrong crowd from 2015 is not filthy rich. What you can invest in are things more or less vaguely correlated with ‘AI being a big deal’, such as GPUs, the infrastructure you need to run them, or companies that do a lot of business in some part of the AI value chain. Some of them are better proxies than others, and not all of them track the same thing. Between the stock market overreaction to DeepSeek R1 and its underreaction to the announcement of OpenAI’s o-series and the associated inference scaling paradigm, everyone should have learned that lesson by now, and it should make you less optimistic about the correlation between ‘AI is big’ and ‘investments work out’.

Conflicting Investments

Even worse, a lot of AI money is in conflicting investments. If some of these investments succeed, that necessarily means some others fail - they hinge on mutually exclusive scenarios. As soon as it becomes clear we’re on a path to one or the other scenario, excluded scenarios suffer fire sales. Note some examples:

As I’ve written before in some detail, two stories of datacenter growth compete: Either some countries will be inference havens for the entire world, concentrate a lot of compute capacity, and service the entire world. Or every country needs large amounts of sovereign inference capacity. But the current market prices in both the UAE’s oil state ambitions and the middle powers’ sovereignty projects simultaneously.

A similar logic arguably applies to AI model developers and ‘wrapper’ companies. If AI model developers are huge companies in 10 years, it’s because they’ve managed to capture a large part of AI-driven revenue by constructing moats and resisting commodification. In such a case, ‘wrapper’ companies would suffer, because their specific product features would be outcompeted by the general models’ functionalities, and vice versa.

This is where GPT-5’s release fits into the story. It signaled a decision by OpenAI to give the title of GPT-5 not to an improvement in frontier AI capability, but to an improvement in product and user experience – directionally indicating an interest in capitalising on ~current capability levels over immediately pushing the public frontier.

I firmly believe not all bets on different versions of ‘AI is really big’ can pay out at the same time. As the corridor of likely futures keeps narrowing, it excludes more and more positions, and sell-offs triggering bubble bursts become more likely. Of course, as options are excluded, AI investment could in principle just move to other players within AI, but that’s where the next two reasons come in:

Reliance on Government Support

Second, the value of AI investments is dependent on government support, and therefore much more susceptible to shifts in public and political perception than most areas of investment. Continued AI growth relies on the government being somewhat convinced that AI is a really big deal, because the AI sector faces grave regulatory risks and burdens that need government support to overcome.

This is what has informed past choices to waive otherwise-burdensome licensing and energy limitations, moved the needle on the application of copyright law to training, and generally motivated full-throated government support for the ambitions of major AI developers. As a result, investors have so far been somewhat certain that little regulatory risk affected their AI positions – quite the opposite. Moreover, governments themselves are keystone customers and conveners for AI investments. Much like it’s a big problem for nuclear energy providers if the government thinks that nuclear power probably doesn’t work, AI takes a particularly big hit from rising public and government skepticism. If it becomes unpopular to announce datacenter projects, to announce huge AI deals with the UAE, to talk about US leadership in AI innovation and the prices you’re willing to pay, the government might just stop – removing a critical source of continued AI enthusiasm as well as a critical buyer and convener of AI and its infrastructure.

Because of that reliance on public and political opinion, small hitches in AI hype can spiral quite quickly: If ‘AI winter’ vibes settle into D.C., political support and vocal boosterism start to dwindle, in turn reducing willingness to continue boosting AI technology and raising investor worries about continued regulatory support. Many readers will share my experience that already now, you get a lot of policymaker questions about GPT-5 being a flop and what that means for AI.

Bad Bubble Optics

Third, AI just really looks like a bubble. Plenty of intelligent analysts have made the general case here: tech growth makes up a major part of general US economic growth, tech and AI stocks make up a bigger and bigger part of major indices and portfolios, and AI investment is reaching levels comparable to the major bubbles of the past. Whether or not you take these arguments seriously, I suspect that enough people holding AI investments do. Bubble talk might be making them nervous and inspiring them to diversify out so they can hedge against the burst and realise gains while they still can. Not everyone who holds money in AI is a ‘true believer’; and even believers can start to waver once numbers turn red.

These points together make for a clear story that requires no pessimism in AI progress: As the future of AI systems becomes clearer, some specific AI investments turn out to be untenable. Resulting market corrections spiral by swaying vibe-based public and political perceptions and routing nervous investors, creating the public perception of ‘the AI bubble having burst’.

So much for the shape of the bubble. I think AI will continue even if it bursts, and it will still change the world, soon. First, I think relevant progress is being driven by the big developers, and I’m not so sure that they’d be hit by the bubble the hardest: they seem least contingent on specific scenarios; and their progress in chasing capabilities seems somewhat well-insulated. Yes, their valuations would suffer, their compute access would get pinched, their talent access would dwindle a little bit. But a lot of talent are missionaries not mercenaries, and the compute buildout funding for the next few years is mostly committed anyways – arguably, all the compute to build “AGI” is already in the pipeline. There’s no stopping progress toward advanced AI by bursting the bubble. Which means we’ll need good AI policy regardless.

AI Policy Is Overexposed

This is where the bubble becomes dangerous. Just as the market is overexposed, so is the AI policy conversation. I think that almost all good policy debates that take this moment seriously are built on the directional understanding that ‘we’re early’: that AI is going to be bigger than the mainstream anticipates, and that being right about AI means predicting that its effects are not ‘priced in’ by our markets and institutions. What happens if a bubble burst becomes ostensible evidence to the contrary? What happens if adversaries aggressively present a bubble burst as a reason to dismiss thoughtful policy? I suggest there are four consequences at least.

The most effective ambassadors of taking AI seriously take a credibility hit. As I’ve discussed previously, a convergent goal of basically anyone in AI policy has been to argue that AI will be more transformative and disruptive than policymaker consensus implies. This has led basically anyone working on frontier AI policy to shy away from indulging more pessimistic notes on AI progress in public and political communications, and instead motivated repeated featuring of the grandest reads of capability evaluations, investment numbers, and government support. If market corrections make this one-sided picture less sustainable, the credibility of advocates who have to change their tune suffers. Policymakers do keep receipts, meeting notes, and policy memos, and they will cross-reference; any discrepancies will mean hits to credibility. AI developers are arguably most exposed, as they’ve indulged in the most optimistic predictions on the most public platforms. But none of this is good news for anyone in frontier AI policy: even today, policymakers are still not taking AI as seriously as they should – with high costs to the feasibility of sensible frontier AI policy. Any setback in public and political perception hurts everyone here.

The credibility of AI-boosting policy takes a hit. Understandably, AI investment as a whole has been flagged as a national priority worthy of exceptional treatment, ranging from permitting to investment coordination to strategic subsidies to the cross-cutting accelerationism of the AI Action Plan. These policies were sold on a vision of inexorable technological progress creating both economic dominance and national security advantages. A market correction hands ammunition to every constituency that lost out in these policy fights, whether that’s environmentalists, copyright advocates, big tech skeptics, or organised labor. They’ll point to (temporary) red numbers as vindication that the whole enterprise was captured regulators chasing Silicon Valley phantoms. This sort of evaporation of political cover can move policy conversations very quickly – especially in AI, where we’d expect the vindicating returns to the political investment to occur later than this first wave of backlash.

In the same vein, the credibility of big-ticket safety policy takes a hit. The big – ‘catastrophic’ – safety risks frequently require a strong belief in the most optimistic progress trajectories. The viability of safety policy is already hanging on by a thread, in part because it lacks a political home: on the right, people disagree with the pro-regulation conclusion; on the left, people disagree with the Silicon Valley-associated premise of fast progress toward genuinely transformative AI – much more on all this here. By providing another excuse to dismiss safety concerns, a market correction exacerbates the coalitionary difficulties of the safety movement.

Anti-AI populism becomes much more attractive. There’s already a latent threat of markedly populist responses to disruptions from AI: Both the MAGA right and the populist left have sympathies for anti-tech sentiment expressed through regulatory crackdowns and more. This can manifest in the context of jobs, it already manifested in striking down the state law moratorium, and it can get much, much worse come 2028. One of the few lines of defence against the populist turn is that so far, serious people on AI have mostly been right about the effects, compelling the current administration to take its potential risks and benefits very seriously. But a story about how ‘the bubble burst’ is all the cloud cover the populist platform needs to argue that serious claims about the effects of AI should be dismissed. And it might be all the excuse a savvy politician needs to galvanise the debate, run with the populist policy, and disrupt the broadly reasonable AI policy environment we’re in right now.

Bracing for Burst

What might we do about this? Frankly, the bubble conversation has begun, and it’s a little bit too late to hedge yourself out of the overexposure. My read is that this is what Sam Altman is trying to do by suggesting there’s a bubble himself: he’s trying to maintain credibility by distancing himself from his boosterist heritage, creating quotes to point to once sell-offs begin. Like many of his past PR gambits, I feel like this is directionally clever but fairly obvious to policymakers, and it isn’t working very well.

It’s probably not even worth it for most people to start collecting receipts and pre-registering skepticism. I’m aware that the authors of landmark predictions have clarified they think everything might happen a bit later, actually. I know that some observers actually only predicted ‘transformative AI’, but not ‘superintelligent AI’; that they only believed in scaling compute, not in scaling LLMs; and so on. But that nuance hasn’t made it to the public or political understanding yet, and I don’t think it will. Because it hasn’t, any attempts to introduce it now just fuel the bubble talk instead: AI skeptics triumphantly point at the hedgers and cite them as further evidence of the imminent collapse.

If hedging doesn’t work, what then? My suggestion is that you should - very actively choose to - do nothing. Not everyone has to find a strategy to make every event good for them. To the extent that your past platform had been contingent on a very aggressive view of investments and AI progress, I propose that, in case of a bubble burst, you just go into hiding a little bit, and reemerge when the conversation picks up again. Any appearance that reminds people of what you used to say, that freshens up the contrast, just hurts credibility in these cases. How you react to bad PR events can make or break your political viability. As I’ve noted at the top of the page, there is a certain pedantic insistence inherent to a lot of AI policy conversations: ‘No, dear policymaker, you misunderstand: I never said the bubble wouldn’t burst, I just said that [some vaguely correlated technical trend would continue]’ might be true. Saying it, publicly, repeatedly, would be a mistake.

In time, a little bubble burst could even prove productive as long as serious people maintain their credibility. I think divorcing the frontier AI policy conversation from product trends is probably already overdue: the product view conceals some of the most substantial risks, like those from in-house deployments at major labs; and it turns out to be an insufficient framework for passing useful regulation. I think for all these reasons, it makes a lot of sense to be prepared for how a market correction might look, and how to take it in stride.

Will this all come to pass? I don’t quite know. I think it’s very plausible that it does not: that sold-off positions just get reallocated within the AI field quickly, that even though the makeup of the AI portfolio changes, its volume continues increasing. But I think frontier AI policy advocates of all stripes should be very aware that AI can be as big a deal as we all think – and that a bubble around it bursts anyway, upending the policy conversation. You should take that prospect seriously.