Seductive Salience

AI politics will be about politics, not AI

It’s long been received wisdom in AI policy that we are facing increased ‘politicisation’: as artificial intelligence systems continue to have greater and greater impacts on all of our lives, the policy questions related to them will become more publicly and politically salient. Speaking in terms of issue polls, you would think that ‘AI’ would soon rank in salience next to economic mainstays like the cost of living and cultural mainstays like abortion; speaking in terms of campaign dynamics, you would think that it’s the AI section of the stump speech you should be lobbying to change. And on this view, getting Bernie Sanders to talk about AI safety counts as a win.

That vision of AI politics has informed a corresponding theory of AI policy advocacy across the field: increased salience will bring policymaker interest, and interested policymakers will make determinations based on what the public thinks about AI policy. Many tactical decisions follow from that: perhaps it’s to your advantage to stall until there’s greater salience that supports your policy idea, perhaps it’s in your interest to hasten or cause salience through public advocacy, and so on.

I think there’s something off with that understanding. My claim is the opposite: once an aspect of AI—its job impacts, its misuse risks, and so on—reaches high political salience, AI politics becomes volatile, captive to broader societal moods, and disconnected from the merits of the underlying policy. AI is a complex technology and its effects diffuse through complex systems, so easy stories about ‘what AI is doing’ can quickly come to dominate the discussion—some correct, some not. As soon as the prospect of AI job losses becomes salient, unemployment and macroeconomic trends from any source will be pinned on AI; as soon as AI becomes a fixture of daily life, cultural grievances of all kinds will be attributed to AI regardless of any factual link. High-salience AI politics is high-volatility AI policy. Majorities and initiatives end up governed by what happens in the CPI more than what happens in the CLI.

The strategic consequence for AI policy operators of all stripes is twofold: You should keep the policies that require careful precision out of the limelight as much as you can—by dealing with them beforehand or resisting the temptation to draw in broader coalitions. And you should prepare for the inevitably high-salience issues by pre-staging policies that fit the political attractors you expect of future politics.

Fog of War

I think that, a few years from now, it will be entirely clear to much of the electorate that AI is a big deal. Even if they don’t quite know why, the signs will be hard to miss: some of the most valuable companies in the world will be AI developers, datacenters will continue to spring up everywhere, and the stock market and the headlines will move on every shift inside the AI industry. But that does not mean that the actual impact of AI will be very clear, or that the salience of ‘AI is big’ corresponds to salience of the factual ways in which AI is big. That’s true for the positive effects as well as the risks.

As for the positive effects: general-purpose technologies usually have quite diffuse effects, surfacing through broad-based productivity gains. An increase in GDP growth, and even its practical effects on individual stock portfolios, tax bases for redistribution and so on, can be attributed to AI only vaguely at best. The same goes for innovations that travel through long pipelines before they reach consumers as surplus. Pressed for examples, AI boosters usually name faster drug discovery and lower everyday friction from agents working in the background as the positive effects of AI, and neither of these will make the tech itself particularly tangible.

People might vaguely feel that ‘things are good’, but that’s a far cry from crediting AI with the impact it might have. To make matters worse, there’ll be a salience gap between the positive and negative effects: narrow stories of localised disruption, such as the kinds of temporary displacement that even the biggest AI boosters expect, will be much more visible. The exception is, in theory, direct consumer use of AI—but even today, a lot of people use AI a lot, and that doesn’t seem to put a dent in the horrible approval ratings of AI technology in general. In the long phase ahead where AI is an important economic input, but not the only thing that happens, you cannot bet on public salience appropriately pricing in the positive effects of AI.

It’s just as unclear that the actual risks will make it into public awareness, though. Many of the risks I consider most pressing for advanced AI (misuse in the domains of cyber and bio, eroding social institutions without replacing them, unpredictably agentic behaviour by advanced models) don’t provide a neat ramp to salience. Things might go well until they don’t, warning shots might be hard to read, and societal effects will only take root with massive delays. To make matters worse, there’s a sticky tendency of AI critics to also be AI skeptics. Many (not all) of the mainstream voices most inclined to worry about AI are also most receptive to the version of AI criticism that paints it as a scam rather than as something genuinely powerful. The notion that tech elites are boiling the oceans for nothing plays better in skeptical milieus than the idea that AI is an awesome technology with a thorny risk-benefit ratio. People will understand AI carries risks, but might deeply misunderstand how.

Frame or Policy?

The most popular versions of the anti-AI case, once it reaches high salience, will be tied to whatever the dominant political attractors of the moment are. Economic anxieties can be linked to AI by further emphasising near-term job displacement concerns; anti-Trump sentiment on the left can be linked to the administration’s close relationship to tech; anti-big-tech sentiments on the populist left and right can be linked to the old social media giants’ role in AI policy; child-safety concerns make for excellent media hooks even where the underlying technical fixes are easy. If you’re a politician who is starting to see that an issue is big, you think about how to best link it to the overall thrust of your work. That rarely affords the luxury of swapping to novel threat models or different coalitions quickly. Instead, it means that a mainstay of the anti-capitalist fight like Sanders becomes a pause advocate, an anti-big-tech advocate like Hawley becomes an AI lab critic, and a culture warrior like Pete Hegseth proclaims the Tenth Crusade to wrest the Pentagon from Dario Amodei’s clutches.

Given that, AI becoming vaguely unpopular and facing pro-regulatory momentum seems like a robust prediction. But how unpopular it becomes might be only weakly correlated with the actual state of the risk landscape; it will turn instead on two things. First, how well does the story linking broader grievances to AI stick? That is, is it worth it for mainstream policymakers to talk about AI as a means to make their actual main political point about economic or cultural issues, or is there a better way to talk about them? That has much more to do with what other news stories dominate than with AI itself. And second: provided the link sticks, what is the mood around these underlying grievances? That is, how bad do Americans actually feel about their economic prospects and the trajectory of the country, and how badly do they want action on AI to fix it? Neither of these questions is predictable or really tractable for the AI policy operatives currently engaged on either side, operatives who still profess to agree on many fundamentals of AI regulation. That makes surrendering AI policy to these tides very risky.

The example of Bernie Sanders and Alexandria Ocasio-Cortez engaging with the case for AI safety is perhaps instructive. My best attempt to make sense of this is that their wing of the party was going to get to some version of anti-AI sentiment anyway: it maps too cleanly onto their broader case that ‘unchecked corporations are a threat to humanity’, which has found expression through political advocacy on everything from climate to worker rights. The degree to which this platform sticks with AI will be mostly governed by political imperatives, and its success and salience will likewise have much more to do with what American voters think of Democratic Socialism than what they think of distribution shift.

The involved safetyists have made a real if hard-to-size contribution to this by introducing them to the topic earlier and adding the Berkeley-inflected spin to early messaging and engagement. I’ll admit that sounds a lot like success. But the policy question is whether that has moved the needle either on the policies they’re asking for (which seem unsound) or on the likelihood that these policies will succeed (which seems basically nil either way). The tactical question, then, is whether this contribution has been helpful as a messaging event. I think the right way to assess that isn’t in terms of winning or losing; it depends on whether you want to increase variance, because salience-fishing is a volatility-raising play, and you should treat it as one.

Riding the Dragon

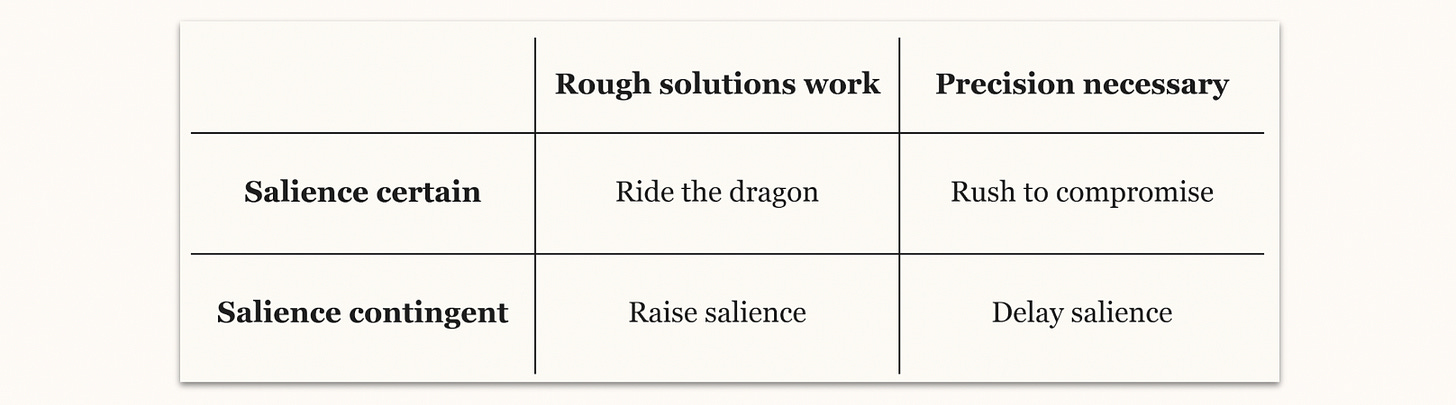

To explain what I mean by that, and to find out when it’s helpful, it’s useful to conceptualise policy work in the shadow of upcoming salience as operating in two distinct categories.

The first category is work that attempts to ride the dragon: accept that an issue will be high-salience, and try to navigate it. Two kinds of policy areas fall into this category: those where you can’t avoid salience, and those where you can’t get anything done without salience. They collapse into almost the same thing: whether through strategic campaigning or because the salience breaks through, issues in either of these policy areas face the same dynamics. They tend to be determined largely by big-ticket macroeconomic trends rather than by the merits of the policy case; but they also carry far more political punch. There are some things you’ll never get through Secret Congress (widespread redistribution in response to labor impacts, for instance); here, you need the backing of political salience.

How do you use this category well? Sometimes, you’ll just be happy to spray and pray: point public salience at an issue and hope that the policy that comes out hits the mark. If you’re a safety advocate who thinks that the Sanders-AOC bill sounds like the best we’ve got, you might think frontier safety falls into this category. But if you think good AI policy usually requires more precision, you might instead think this approach works well mostly for preventing things from happening. The past moratorium fights are instructive instances of this: the defeats of the moratoria ultimately ended up being about everything from artists in Nashville to doomers on War Room, but that didn’t matter much; it was enough that everyone involved vaguely agreed they didn’t like Ted Cruz or moratoria. Now that that’s over, and the legislative question is moving toward ‘what should actually be done?’, the coalition is showing its predictable fault lines: there’s little alignment on what to ask for on the substance, even if the concerned rhetoric still papers over most substantive misalignments for now.

But in other cases, you might want to think about upcoming public salience as something to anchor or steer. For instance, many in AI agree that political appetite for policy action on labor impacts is coming soon. If that’s true, the right way to deal with that is not to waste a lot of money and effort on spreading counterintuitive messages that have no political home, but to reason backward from what policymaker incentives will be once the salience gets there.

For the jobs issue, that might mean that, as industry, you want to develop, test and poll a message that politicians who want to stand by you can say without making fools of themselves: not a maximal denial of the jobs impact, but a defensible way to hold the line against anything from token taxes to fully automated luxury communism. ‘Yes, we do believe there will be some displacement, but we’re approaching this phase as a momentary disruption on the way to better jobs, and so we’re providing a generous but time-limited welfare expansion’ doesn’t sound absurd to me as a start.1 There are equivalents in the frontier safety conversation, short of maximalist oversight, that others have written eloquently about in ways that lend themselves to honest conversation with intelligent voters.

On the other side of the discussion, if you’re someone who wants to make active labor policy, you might not want to spend your time explaining to people why the jobs crisis is really coming, but instead pre-seed constructive ideas about how to deal with it once it arrives: you might, for instance, spend some time explaining to likely jobs populists why calling for higher corporate income taxes across the board is equally politically effective and much better policy than narrowly targeted token taxes. Before the salience comes, either of these interventions is cheap: they merely require targeted policy development and some surgical political spending to circulate the ideas, not a big salience campaign, yet they can still anchor the policy conversation.

Borrowing Some Time

The second category is work that attempts to avoid salience. These are policy areas where you feel that directionally good is not good enough: where you need very precise interventions, and the second-best solution inverts the value of the policy. Frontier safety regulation might be one such area, where I believe most heavy-handed solutions quickly push deployment into labs, reduce democratic leverage over future developments, or overindex on incidental technical realities of the moment. International treaties are arguably like this too: as I’ve argued in the past, they require a lot of sophistication in the negotiation process that doesn’t lend itself well to quickly accommodating political demands. That’s why I wouldn’t place AI safety in the ride-the-dragon column. To me, a popular movement for a pause seems doomed to fail, and any reasonable progress toward safety cooperation is instead likely to be made through the high-level convening circuit, far away from public attention.

Kept out of the spotlight, compromise on such issues remains possible. I won’t reiterate the case for that—you can find it in past pieces on this publication, for instance here and here.

AI technocrats on both the industry and pro-regulation sides have real ability to keep the salience of such issues low for some time, primarily through their political spending arms. It’s not that hard to convince a politician to talk about the second-biggest issue instead, to let sleeping dogs lie for a time: by definition, they won’t have a big stake in a topic before they raise it. Yes, it feels more satisfying to spend that money to start a big fight, to get a strongly worded draft bill and a televised press conference out of riling a candidate up. But in many cases, the more effective way to spend it might be in coordination: keeping policymakers from moving the issue into political debate in order to retain the space for compromise. Politicians frequently lead political salience, and political spending can in turn inform which issues they decide to elevate. You can’t always prevent an issue cluster from rising to political salience altogether, but you also shouldn’t sell yourselves short: the current cast of AI policy actors controls enough resources to nudge the salience trajectory if it so chooses.

Now, I know you might have qualms about this position on democratic grounds. My argument, after all, is to delay a certain amount of public say on the issue. I’m sympathetic to this concern, and it keeps me up, too. But I’d like to propose to you what I’ve landed on: that in a republic, our elected and appointed officials have some prerogative to at least try to figure out a solution during their tenure, and that the public ought to then deliberate based on the record they submit. As policymakers are only slowly beginning to grapple with even the possibility of advanced machine intelligence, I feel inclined to give them a shot to begin and finish a body of work in the leadup to the next presidential election at least, and to afford them a low-salience environment while they do. I think you can rest assured that the public will get its day in court on this either way. My suggestion is that this is not so much about preventing democratic participation as about buying some time to clean up our room before the sovereign comes to check in on us.

Compromise Carrots, Populist Sticks

The problem areas, of course, are those over which no current actor has control: those that would require precise interventions, but where you either can’t get anything done without salience or where salience is inevitable. This is a common objection to my starry-eyed hopes for technocratic compromise: ‘No, you don’t get it, the companies don’t want to regulate and they’ll spend big to keep regulation at bay, so we have to raise the salience!’ I think that’s a rational reaction for a regulation advocate. Industry should take note of that incentive structure—not compromising forces the pro-regulation advocates’ hand to everyone’s disadvantage.

That’s because, if industry appears plausibly opposed to all regulatory compromise, it invites salience-raising advocacy from its opposition. I think that’s in turn likely to lead to even worse policy down the road: you trade a few months of being unregulated for much worse regulation later on. The pro-regulation technocratic elements you could compromise with are not willing to destroy your industry outright as collateral damage, but the populist spirits they feel they need to summon if you don’t play ball might be. For industry, that means getting it together and realising that refusing to compromise just makes things worse down the line. For the rest of us, it means the most effective way to deal with inevitable salience is to hedge for alliances and seed the best versions of ideas if you must, but not to accelerate the trend: treat populism as a forcing function for pre-political compromise wherever you can.

I don’t buy that compromise would be bad for policy outcomes: this is not a situation where scrappy NGOs fight big tobacco. Veterans of past fights often appeal to the lessons drawn from the stereotypical uphill battles against nefarious industry that were only won once the public got its day in court. But that’s not our situation today! Good policy ideas are coming out of a well-funded ecosystem that is only picking up steam, and reasonable policymakers are in the room. And what the pro-regulation side lacks in pure funding muscle, it arguably makes up for in D.C. structures and projected future salience even today. We can reach compromise, seeing eye to eye in the shadow of approaching salience, without needing the wave to break first. I’m not ready to accept the retreat into tired tribalist lines as necessary just yet.

Going back to the Sanders-AOC question, which is in many ways an interesting early toy example of the broader strategic question, I think my version of the picture should be clearer now: safetyists have thrown their weight behind raising salience by successfully luring the anti-corporate wing of the Democratic party with rhetoric that fits their political priors very well. But the cost of that is imprecision: Sanders and AOC have reached for an ineffective version of an ill-advised idea, a proposal so incoherent that even its would-be supporters have retreated to defending only its ability to ‘send a message’. As discussed before, high-salience dynamics like these would inevitably make the policy fall short of the technical precision it would need to achieve its safety-relevant outcomes. I do acknowledge, though, that those who think we face unbearably high odds of doom will take any progress they can get, however unreliable, in an attempt to flip the gameboard.

What could have been the smarter move? To seed compatible policy ideas, to actually work out and test beforehand what smart things a Democratic Socialist could say about AI, workers, redistribution and labor displacement, and then get these ideas in place in time before politicians inevitably reach for the issue. And at the same time, industry could have gotten its house in order and seen whether it wouldn’t prefer to make good policy before Bernie Sanders kicked in the Overton window on AI pauses. That would’ve been real progress.

Outlook

You can’t fight salience, and you can’t change politics. But you can delay and hasten, you can anchor and nudge. Faced with that political toolkit, I cannot help but note the irony that some advocates have chosen a recklessly accelerationist approach to political salience that has awakened a dynamic they can no longer control.

Increasing political salience of issues in AI policy is neither a panacea for pro-regulation advocates nor a hard deadline to get all of AI policy done. But for every AI policy area that gets dragged into the limelight and turns into AI politics, we sacrifice policy precision for high-volatility political will. It’s only very rarely worth it: we should try to avoid it through technocratic compromise wherever we can, and try to anchor the popular conversation through developing and spreading politically suitable ideas where we cannot.

More on that soon!