Can You Poach A Frontier Lab?

A stepwise roadmap for U.S. allies after the Anthropic crisis

Last week, the U.S. government threw into chaos the delicate public-private balance that has until now governed the rise of advanced artificial intelligence. Following an escalating conflict between the Pentagon and AI developer Anthropic, Secretary Hegseth announced that he’d declare Anthropic a supply chain risk, barring them from contracts with the Pentagon or, critically, other defense contractors. The implications are dire: the designation hits Anthropic’s business at a time when they’re gearing up for an IPO built around a story about business deals with governments and their contractors. And as they’re pursuing volatile infrastructure contracts and racing their chief rival OpenAI to IPO first, even the fact that the courts might kill the move provides only faint relief. That means the decision goes far beyond the sensible off-ramp of just cancelling federal contracts, an off-ramp that President Trump had seemingly endorsed shortly before the announcement: the U.S. government is, in effect, trying to derail if not destroy one of its foremost technology companies.

This episode has made observers doubt whether the U.S. government in its current shape is a suitable hegemon for the coming years of rapid AI progress. And while I maintain that the American project is the best realistic bet we have, recent days again underline that we’re currently not dealing with nearly the best version of that project. That invites an international angle: is there some way that the rest of the world can simultaneously pick up the slack and gain some leverage along the way? Can liberal democracies elsewhere offer more favourable conditions to concerned frontier developers, creating a hedge for the labs and a counterweight to the U.S. at the same time? I think yes, and though it’s not exactly easy, the current window allows for concrete policy action in the coming weeks.

Short-Term Follies

It brings me no pleasure to begin this essay by throwing some cold water on the worst version of a decent idea. If you’ve looked at somewhat clued-in Europeans’ social media recently—and increasingly, higher-level chatter—you’ll have noticed a story: poor Anthropic, discarded by USG and temperamentally aligned with the more safety-minded Canadian-European axis, might just up and leave. In other words, people talk about actually moving Anthropic all the way to Europe as an immediate capitalisation on the current window. No, you cannot outright poach a frontier lab tomorrow. I think the idea itself betrays both a misunderstanding of what a frontier developer is and a counterproductive communications instinct.

It’s worth understanding what makes a frontier developer competitive in the next year or two. The relocation discussion mostly treats a frontier developer as a software company: a bunch of smart engineers in a lab, and if you can make them prefer spring in Paris over amorphous fog season in San Francisco and the Tuileries over a park on top of a bus station, you can move Anthropic to Europe. This vibes-based model of poaching Anthropic ignores two structural constraints: compute and capital markets.

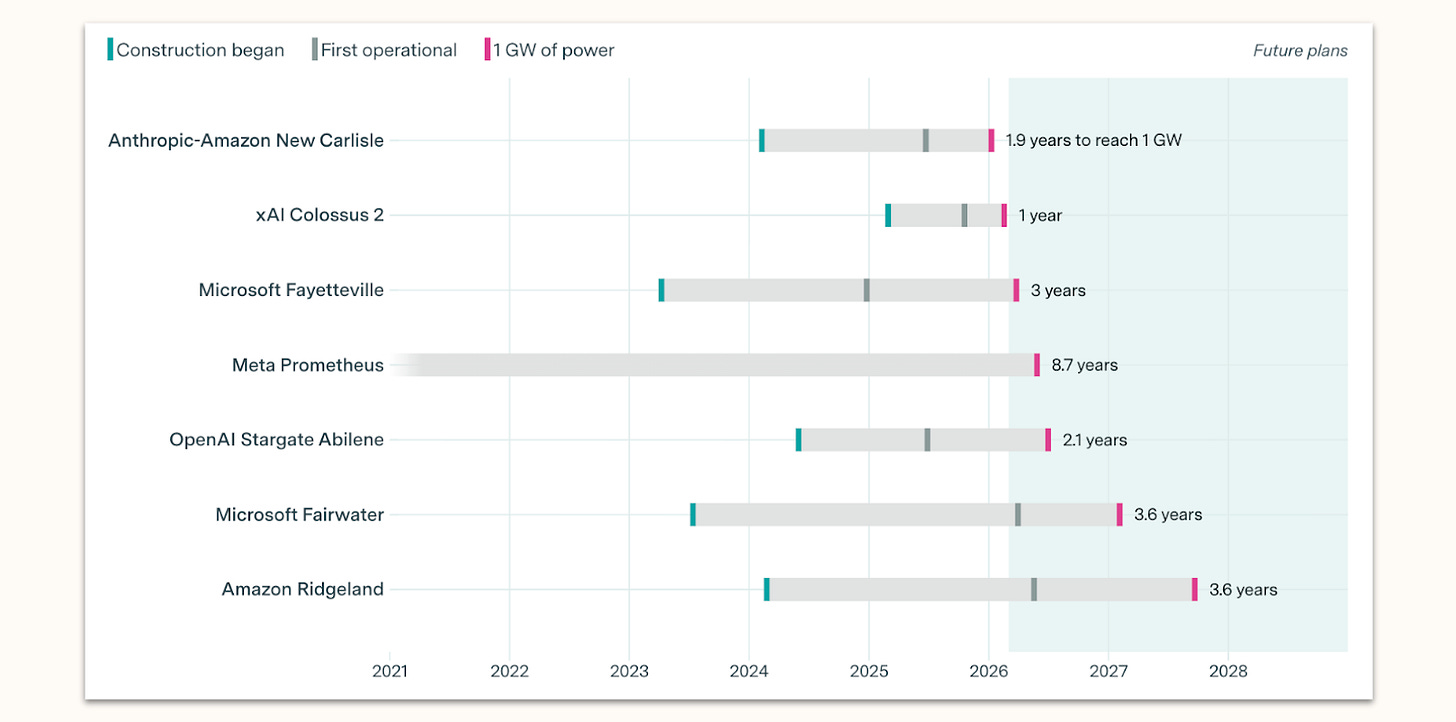

First, frontier developers are fundamentally fairly thin wrappers around available compute: compute already online, and in particular the gigawatt-scale clusters coming online this year and next. And since Anthropic wants to remain competitive with its U.S. rivals, any poaching bid would have to guarantee a similar timeline to deploying comparable levels of computational resources. There is no consortium of Western democracies that can offer remotely comparable compute. Anthropic alone has around a million Trainium2 chips coming online through AWS’s Rainier megaproject—a 2.2 gigawatt campus—with further capacity through Google Cloud and a separate $50 billion datacenter deal. The entire European public AI compute estate, across all of EuroHPC, amounts to roughly 57,000 accelerators. Future plans are late: FluidStack’s 1 GW French supercomputer, the most ambitious European project, remains at the MoU stage; the EU’s AI Gigafactories are a policy concept with a call for proposals scheduled this year and operations no earlier than 2028. Even if you redeployed all of it, you’d arrive years too late and orders of magnitude short. And even if you rallied all political support to start construction on proprietary clusters to attract Anthropic tomorrow, any realistic timeline would still see them online far later than American projects already under construction.

Could Anthropic retain access to its U.S. compute base even if it decided to pursue the kind of visible departure that Europeans are entertaining? I strongly doubt it. The Trump administration definitely has the toolkit to control a now-foreign firm’s access to U.S.-based compute if it decided to do so: both remote access and export of the chips themselves could quite easily be export controlled; the attempts of a U.S. subsidiary of a now-European Anthropic to access AWS compute in America could quickly be curtailed, and so on. Before Anthropic even considered any such defection, the Pentagon already did not shrink from punitive treatment; I think it’s likely they would escalate at the attempt. There’d be a sort of bitter irony in the export control hawks at Anthropic getting the short end of export enforcement, while Chinese remote access remains live—but the same sort of irony applies to the supply chain risk designation, and that didn’t stop Secretary Hegseth, either.

Second, frontier developers’ ambitious spending requires access to extraordinary capital markets. Beyond the general depth of U.S. financial markets, the particularly important piece of the puzzle here is IPO timing: it would be quite valuable for Anthropic to be the earliest IPO among the big AI companies, to attract big institutional investors and the first wave of zealots seeking direct portfolio exposure to AI growth. Anthropic wants this IPO story, and it ideally wants it first. Relocating means starting the IPO story from scratch, reassuring underwriters and investors that the move doesn’t dent projections, while also moving to a stock exchange with far less favourable conditions and available capital. OpenAI would be first, and the Anthropic IPO would dramatically underperform expectations. In short, if you have a shot at being the first AI stock to IPO on the NYSE, you’re not going to swap that for a lukewarm listing in late 2027 on the LSE.

On these structural grounds, Anthropic is deeply committed to, and in fact dependent on, processes unfolding in America right now. Anthropic is also dispositionally unlikely to bear large temporary costs to their competitiveness at the present moment: they believe now is crunch time, that the race is heating up and they might even have a leg up heading into recursive self-improvement. For a company this certain that now is the time, defecting from the most attractive capital market and established compute pathways will simply not be an option. In other words: if what they say is right and this is the beginning of the endgame for AI policy, then middle powers are down a rook and should cease attempts to simplify.

An Offer They Will Refuse

You might understand this, and still believe it’s worthwhile for other democracies to reach out and attempt to poach anyway: to put an offer on the table, to increase Anthropic’s leverage, to provide a forcing function for intra-democratic coordination. I believe that would be mistaken: making this question politically salient would be bad for Anthropic and ultimately bad for any progress toward diversification short of a wholesale transplant.

Advocates of this persuasion are misreading Anthropic’s position. Since leaving is not a plausible path, and the administration knows it, the ‘offer’ provides Anthropic with no additional leverage at all. Anthropic in its current PR posture will have to turn down the offer visibly and credibly if this becomes a big story, lest they invite more vicious retaliation from the administration for very little upside. This bears repeating: if you are one of my friends on the middle powers beat and are more enamoured with this idea than I am, please don’t go the way of big public statements, open letters, or policymaker communications. Giving this idea public salience sharpens the political conflict in America, helps the administration paint Anthropic as disloyal and anti-American, and ultimately compels Anthropic to clearly reject the idea before there has been time to approach it stepwise and with subtlety.

Long-Term Prospects

Stepwise and subtle, however, is a possible way to do this: understand the project of ‘poaching’ a frontier lab not as an attempt to extract value from the U.S., but to diversify the Western stack to make it more resilient to transient political trends and disruptions. My broader claim here is simple: it would be good for the world if a sizeable minority of American developers’ compute, business activity, and government cooperation were located in allied democracies. That could be about Anthropic, but I’d be just as happy with OpenAI or Google DeepMind. In a pinch, I might even take Meta. That outcome is eminently reachable and obviously beneficial in the aftermath of the Anthropic/Pentagon saga—and it’s never been more clear to the frontier developers that some hedging might be in their very best interest.

Why is it obviously beneficial? The general endgame theory here is that the worst version of the U.S. is not an optimal steward of AGI. And while I still think the American project is our best bet to get all this right, I do think there’s a big difference between a good and a bad version of America winning. Next to U.S. domestic engagement, I think sound reasoning on how to affect that delta starts with the question of how U.S. allies can elicit that best version. The fact that AI will not lead to European supremacy is not to say that there is no contribution to be made from the liberal democracies of the world. Western democracies have institutional stability, regulatory predictability, and supply chain security to contribute to an American political system that is increasingly fraying at the edges. And at the very least, the Western allies can build up to be a viable alternative: a backup place for Western-aligned organisations to go, and a credible threat if the U.S. overreaches. Like my European friends, I want Europe to succeed, and with an increasing number of American friends, I share the view that the most successful American project takes place in a world that succeeds, too.

I’ve mostly argued that we can reach this place through an expansion of the upstream and downstream leverage held by middle powers, thereby cutting them in on AI-driven growth and providing them some independent strategic foothold while still fundamentally providing for deep integration into the American stack. But a marginal increase in U.S. developers’ footprints in middle powers is similarly helpful to national prospects and international balance, along three lines.

Middle Power Upsides

First, if your country hosts frontier developers’ infrastructure, you hold global leverage over the flow of artificial intelligence. That gives you some ability to make credible threats, and conversely makes you somewhat less susceptible to that kind of threat against you: no one can directly cut you off from the compute you need to run at least the models you have access to, and sometimes you can even cut off others’ access. The important thing to note for that latter part is that a large coordinated share of global inference compute gives you global leverage even if you’ve mostly been using that inference yourself: if you have the ability to take 10% of the world’s inference compute offline, the shock to global supply can spike prices and inflict real pain on others as well. Compare this to OPEC countries: their single-digit shares of global oil supply have frequently rendered them less susceptible to superpowers’ plays and better able to negotiate their own fate.

What does this compute-focused notion of leverage have to do with frontier labs? For one, cooperation with frontier developers is among the easiest drivers of major compute build-outs in most countries: developers are exceedingly hungry for compute, willing to enter all kinds of deals to increase future supply, and generally able to mobilise massive amounts of capital through partnerships. Compared to the idea of having local European firms or even governments build out the compute, simply making it attractive for the bullish and rich Americans to build in your country seems far easier. What’s more, your compute is much more valuable if it’s being used for frontier AI than if you’re using it to build the sixth-best open source model east of Lisbon. No global market really cares if the French government turns off its own datacenters, but if we’re talking about Anthropic’s inference stock, things change. You might wonder why a developer would accept this if the turning-off scenario is in the cards, but again: it’s in the cards no matter where your datacenter is, and the question is whether you want all your eggs in the basket that just threatened to designate you a supply chain risk.

Second, countries with a substantial frontier developer presence gain the ability to enforce contracts for frontier AI access. I’ve argued in the past in some more detail that importing frontier AI is imperative for middle powers due to the objective superiority of U.S.-built AI, but that access is unreliable due to securitisation prospects, economic pressures and competitive margins. The question then is how to forge contracts for access to American AI that last. The answer is that you need leverage, concretely over the company that might otherwise be compelled to breach its contract for economic or political reasons. If part of the lab is in your country, it’s much easier to ensure that access, because you can at least make sure the resources physically located in your country aren’t used for anything else: a lab can break its contract and stop serving you the latest version of its latest model, but in that case its datacenter on your soil isn’t running anymore. Very similar logic applies to talent, corporate listings and intellectual property. Middle powers want stable relationships, and the more assets they physically hold within their borders, the more stable these relationships are, because it’s costlier for the labs to renege. That is valuable, not just because of the access itself, but because it allows countries not to run costly resilience strategies, like building their own models, to hedge against being cut off.

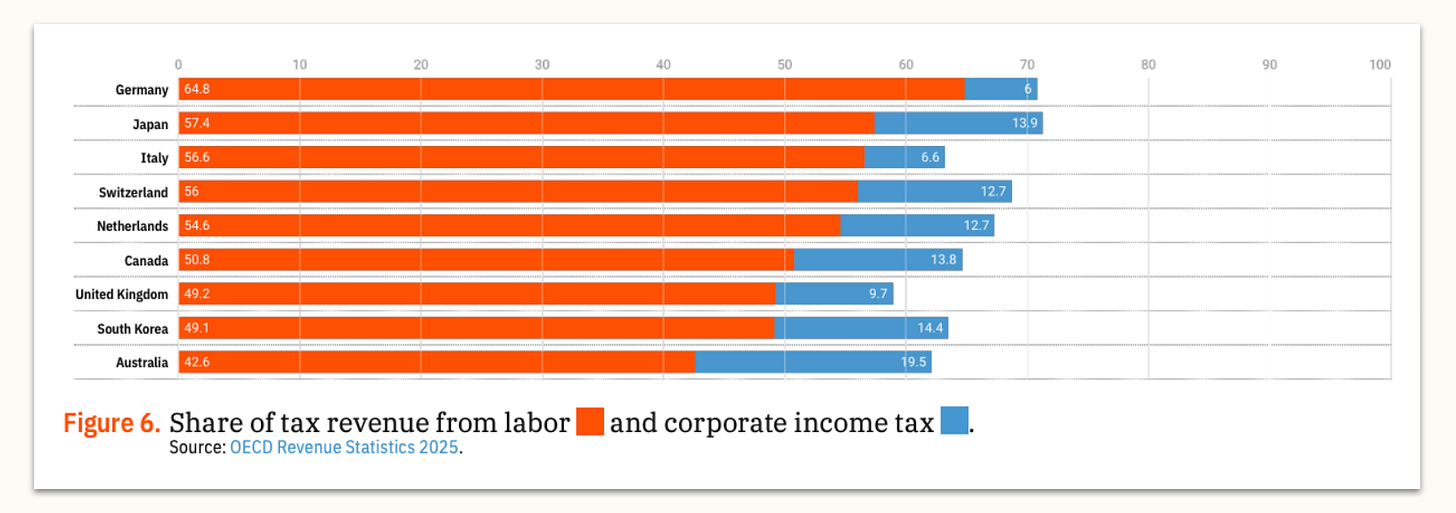

Third, countries with frontier developer presence have a pathway to fiscal stability. Most non-U.S. countries aren’t fiscally equipped for AI-driven labour market disruptions, in part because these disruptions would simultaneously present a policy challenge and lead to a cratering of income tax revenue. Whereas the U.S. will recoup these losses through corporate taxes levied on the AI agents that displace the human workers, many other countries won’t be so lucky. Locating even some amount of frontier AI activity within the confines of your tax authority is therefore a very attractive fiscal pathway for a middle power vulnerable to this sort of disruption. That is admittedly not trivial: U.S. corporate tax is low, so you’d have to offer the frontier developers sizable tax breaks for a move between tax jurisdictions to ever be viable, and so the revenue wouldn’t be that impressive. But still: if a major share of labour income moves to corporate income in the near future, even securing a small slice of the corporate tax for a company like Anthropic is a huge deal for the fiscal stability of any middle power bloc, or in fact a broader consortium of such powers.

Solving these three problems doesn’t solve many deeper questions of middle power strategy, of course. They’d still remain susceptible to U.S. leverage, and still not be able to unilaterally exert their will on the trajectory of frontier AI. But leverage is not an all-or-nothing question: every bit of friction and pushback helps, and frontier developer presence helps in the particularly important areas outlined above.

Ways & Means

Even though Western allies are currently not equipped to transplant Anthropic, or any developer, entirely within their borders, the situation in America still enables stepwise progress toward that goal. How? Quite fundamentally, the pathway to getting frontier developers to expand their footprint is to ask them what they want. In that sense, the first step is to get together a coalition of aligned democracies that share the analysis I just outlined, take stock of the strengths they have and could pool, and then approach the AI developers and simply ask them under what conditions they’d consider dramatically increasing their presence and footprint.

That said, I think there are measures that make sense to flag early, and that would have to be part of an actually compelling expansion offer. They generally fall into three categories: specific project-focused state-owned action, general changes to the regulatory backdrop, and efforts at international coordination.

State-owned Action

Keystone developer-government contracts for the use of frontier systems in public administration and national security. Government contracts are (usually) sticky and long-term, an important business anchor as well as an important token of deepening relationships between developers and host countries. Their funding and modalities are in the direct purview of governments, and they can decide to hand them out tomorrow. In the aftermath of the current episode, allied governments could approach Anthropic to use their systems in security-relevant applications — offering exactly the kind of partnership that the Pentagon just reneged on.

Preferential access to bottlenecks. I’ve written before about the positions many middle powers hold on bottlenecks for advanced intelligence: the manufacturing required to turn tokens into real-world value, and the semiconductor supply chain that provides the substrate for AI. Short of aggressively leveraging these bottlenecks, middle powers could use them to steer capacity toward frontier developers they cooperate with: preferential and custom equipment access for ASICs—application-specific chips—for their best friends, preferential integration of developers’ models into AI-empowered manufacturing, and so on. They can offer an accelerated timeline toward vertical integration that allows quicker iteration and perhaps even recursive self-improvement across the stack.

Capital mobilisation to offset the difference in private capital markets between the U.S. and other countries. In particular, two pathways to this capital seem promising: closely involving large legacy firms in middle powers for investments and integrated deals; and leveraging sovereign wealth and pension funds as anchor investments. Funds and legacy corporations are huge untapped sources of capital that are currently routinely underperforming capital markets precisely because they are not exposed to technology growth—rallying them around this plan is risky, but can solve two problems at once.

Regulatory Conditions

Copyright & data protection carveouts: frontier developers that increase their footprint should be allowed to train at least as liberally as they would in America. That would ensure that developers at least have the choice to create some share of their suite of leading AI systems outside of American jurisdiction. Otherwise, even substantial nominal presence would still concentrate the most critical intangibles in the U.S., and little would be gained. The main failure mode to avoid is that developers have a large footprint in other powers, but their important business activities still exclusively take place in America. I’m sure frontier developers have a clear sense of the carveouts necessary to prevent that scenario, which should at least be a strong starting point for scrutiny.

Preconditions for enough compute: downstream of all these questions lies the actual compute question. If you get the capital and integration questions right, developer presence would be likely to translate into compute footprint if the regulatory and energy requirements are met to motivate a buildout. That requires enabling the construction of proprietary energy infrastructure, including both behind-the-meter gas turbines and nuclear SMRs; as well as permissive licensing that allows for quick construction of datacenters. That, to my mind, is the way to think about compute: draw developers’ interests and then get out of their way, not straight-up build it yourself. The alternative is speculative investments with unclear buyers, and so compute is not a first-order policy priority itself.

Attractive corporate tax conditions—competing with U.S. rates, on the understanding that even a discounted share of what could become the dominant revenue base is transformative for middle power fiscal health.

International Coordination

Consortium-building and burden-sharing. It’s not quite clear who exactly would make this offer: the EU alone lacks the capital, talent concentration, and infrastructure, but some of the Five Eyes or Pacific allies might be a little too close to America to risk what might be read as a somewhat adversarial play. I think Canada and broader Europe might be a strong starting point, and they should endeavour to include other Five Eyes members as well, especially the UK, which is well positioned to play a leading role in this coalition. Setting this up is not easy: there are agglomeration effects that cut against distributed gains from coordination. Everyone has to pitch in, but not everyone gets the tax revenue and the immediate leverage from datacenter location. Any alliance would have to complement coordination with robust risk- and benefit-sharing mechanisms that distribute the outcomes of this play across the alliance: shared fiscal responsibility for the investments, ad hoc harmonisation of regulatory landscapes for the copyright issues, special distribution channels for levied taxes, and so on.

U.S. reassurance. This can’t be framed or come across as ‘we’re taking away your AI developers’. If it is, the administration will react restrictively and punitively in a heartbeat. I think there’s a good substantive argument to be made in favour of this diversification project, ironically especially to the current administration. The Trump administration’s views on AI are fundamentally pro-AI, and soft on restricting the outflow of AI capabilities in general terms. This, and decidedly not questions of rule of law, military use, leverage, or the Pentagon’s choices, has to be the frame. In many ways, pursuing this strategy constitutes buying American: investing in U.S. firms, providing them infrastructure, integrating them deeply into local stacks. Progress along the diversification trend needs to be pursued in that spirit, and therefore needs to happen in close communication with the U.S. government, which should in turn retain the ability to set some red lines for tech transfer and decentralisation. Generally, this will not work if it’s read as something middle powers are doing to the U.S.

The Politics of Poaching

Now I’ve worked in politics long enough to know that if you’re a staffer and take this list into your principal’s office, you’ll have to hand over your access badge on the way out. The ‘local champions’ will revolt, the incumbent ecosystems will curse you out, the nay-sayers will say you’re gambling on American pipe dreams, and well-meaning ‘patriots’ will say you’re throwing in the towel. But the gap between where Western powers are and where they’d need to be to invite a major developer footprint really is that large, and measures like these really would be necessary.

That is to say: we have a decent idea of what the policies should be, and if we didn’t, the developers would help us find them. The bigger issue is that you’d need a lot of focused political will for this. That will would have to be based on a clear understanding of the three dynamics I’ve outlined: the leverage, the contractual stability, and the fiscal pathway that frontier developer presence provides. That’s why much of this post is about motivating reasoning. The mechanistic pathways for stepwise expansion of developers’ footprints are much less complicated than explaining to incumbent allied governments why advancing this expansion is in their interest. To my mind, the biggest bottleneck remains making my three arguments above clear to the allied policymakers who would need to push for this play.

Outlook

I still think there’s a window here. Western middle powers have been looking for a promising and concrete play to rally around—and this might be it. As always on middle power strategy, this requires a delicate balance: you have to take frontier AI seriously enough to realise how important doing this is, but not get drawn into delusions of full independence from America.

But if policymakers come to understand what hosting frontier developers is actually worth, they can take first steps today. That would be progress toward a more stable world in which the best version of America could win.